Few technologies have moved from the fringe to the fundamental as quickly as AI. The speed has been relentless. Today, AI is embedded in your stack, your workflows, your vendors, and the tools your employees rely on every day, processing the very data your organization is responsible for protecting.

AI adoption across industry lines has happened faster than most security programs were built to handle, and faster than most governance frameworks were designed to absorb.

That kind of reliance creates a new kind of risk. And with that risk comes a new kind of accountability. Boards are asking tougher questions, regulators are accelerating oversight, and customers are demanding clear assurances around AI governance. Which means every CISO and GRC leader is now expected to have a clear answer to a question that barely existed three years ago: Can you prove your AI is trustworthy?

The New Standard: Frictionless AI Trust

There are two core elements to proving trust in AI governance. The first is internal: setting policy, ensuring safe execution, achieving compliance, coordinating across functions, and building real controls into how AI systems are developed and managed.

The second element is proving the maturity of your AI governance program to prospects, customers, vendors, and regulators. Trust is not only built internally. It is demonstrated externally.

The companies leading in AI governance understand this. They’re not just creating policies; they’re making governance publicly accessible, modular, and easy to navigate. As a result, trust becomes frictionless rather than procedural.

This shift mirrors what happened in traditional security compliance. Trust Centers transformed how companies demonstrate framework and regulatory compliance. Instead of endless spreadsheets and questionnaire cycles, customers gained access to live dashboards and structured disclosures. As a result, transparency replaced the friction of back-and-forth.

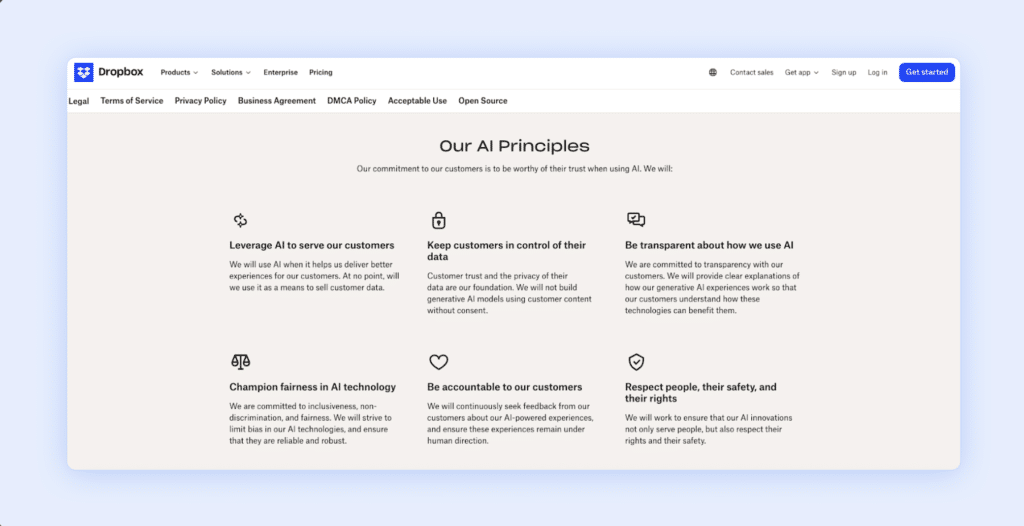

AI governance sections in modern trust centers now follow the same pattern. Leading companies such as Dropbox, Autodesk, and Notion share a clear blueprint: by breaking governance into modular, transparent sections, they make AI trust easier to understand and evaluate. Below are the five core modules shaping modern AI trust programs.

Module 1: The Model Inventory

AI governance now begins with specificity. It is no longer sufficient to claim that AI is embedded in a product. Buyers and risk teams want clarity on which models are being used, how they are integrated, and what data boundaries exist around them.

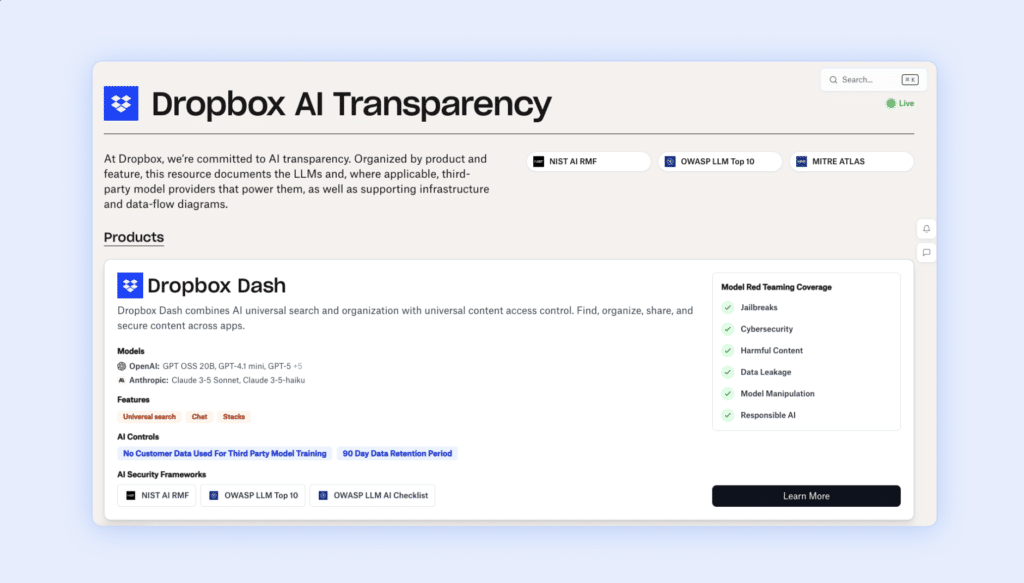

Dropbox’s AI Transparency page reflects this shift. Models are disclosed by product and feature, and the underlying providers are named. Notion similarly clarifies its reliance on models from OpenAI and Anthropic and keeps customers informed as those dependencies evolve. Autodesk, through its Trust Center and Trusted AI documentation, outlines how AI systems operate within a defined governance framework that prioritizes data protection and intellectual property safeguards.

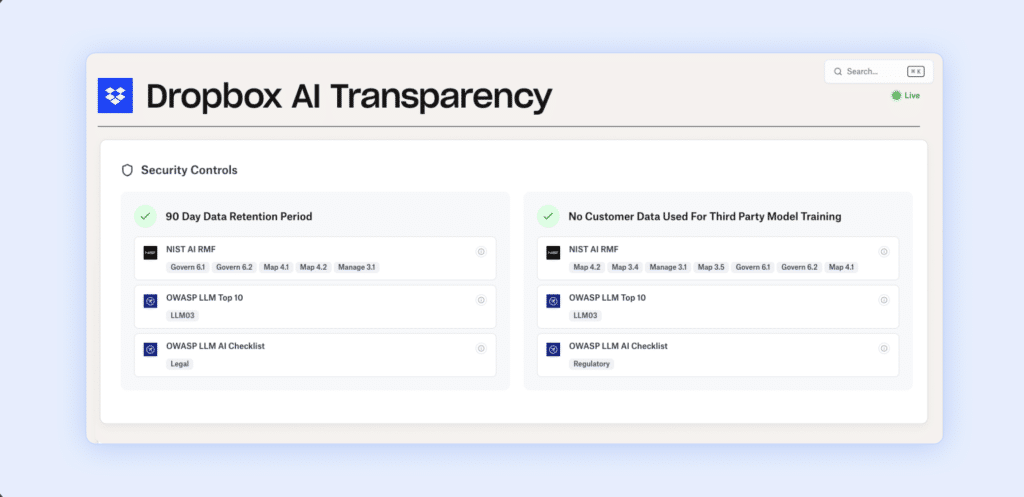

What distinguishes these disclosures is not simply the naming of vendors. It is the inclusion of operational guardrails: defined retention windows, contractual prohibitions on training with customer data, and clear statements about how information flows through the system. When Dropbox specifies that customer data is not used to train third-party models and defines a 90-day retention period, it transforms an abstract privacy commitment into something measurable and reviewable.
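To make the idea of a measurable, reviewable disclosure concrete, here is a minimal sketch of a model inventory kept as structured data. The `ModelEntry` fields, the `guardrail_gaps` helper, and the provider, model, and feature names are all hypothetical and not drawn from any of the companies above; the point is only that each entry carries its guardrails alongside the vendor name.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelEntry:
    """One row of a hypothetical model inventory."""
    feature: str                    # product feature that uses the model
    provider: str                   # third-party model provider
    model: str                      # model name as disclosed
    trains_on_customer_data: bool   # True if training is NOT contractually prohibited
    retention_days: Optional[int]   # None means no retention window is stated


def guardrail_gaps(entries):
    """Return (feature, reason) pairs for entries missing an operational guardrail."""
    gaps = []
    for e in entries:
        if e.trains_on_customer_data:
            gaps.append((e.feature, "training on customer data not prohibited"))
        if e.retention_days is None:
            gaps.append((e.feature, "no retention window stated"))
    return gaps


# Illustrative entries only; every name here is a placeholder.
inventory = [
    ModelEntry("document-summary", "ExampleProvider", "example-model-a", False, 90),
    ModelEntry("search-answers", "ExampleProvider", "example-model-b", False, None),
]

print(guardrail_gaps(inventory))
```

A check like this could run before a transparency page is published, so that every disclosed model ships with a stated retention window and training stance.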

Trust conversations now start earlier in the buying cycle and go much deeper, especially during procurement and vendor risk reviews, where questions about models, data usage, access, and retention are inevitable.

Module 2: Standardized Risk Frameworks

AI governance may feel new, but risk management isn’t. Organizations have always relied on standardized frameworks to assess and manage risk across security, legal, and compliance functions. Without a shared vocabulary, AI oversight becomes fragmented and inconsistent.

This is why leading organizations anchor their AI governance programs in recognized standards. Dropbox publicly maps its AI systems to NIST AI RMF, OWASP LLM Top 10, and MITRE ATLAS. That alignment does more than demonstrate diligence. It situates AI risk within frameworks that already carry institutional authority.

NIST AI RMF provides a governance-level structure that aligns AI risk with enterprise risk management. OWASP LLM Top 10 defines concrete threat categories such as prompt injection and data leakage, translating abstract model risks into security-relevant terms. MITRE ATLAS supports adversarial testing and red teaming, operationalizing threat modeling for AI.

By aligning with these standards, organizations reduce ambiguity: security, legal, and compliance teams assess AI risk in the same vocabulary.
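One way a team might put that shared vocabulary to work is to tag each entry in its risk register with the frameworks it has been mapped to, then check coverage. The sketch below assumes a simple in-memory register; the `risk_register` entries and their mappings are illustrative placeholders, not an official crosswalk between the three frameworks.

```python
FRAMEWORKS = ("NIST AI RMF", "OWASP LLM Top 10", "MITRE ATLAS")

# Hypothetical register: each risk lists the frameworks it has been mapped to.
risk_register = [
    {"risk": "prompt injection", "mapped_to": {"OWASP LLM Top 10", "MITRE ATLAS"}},
    {"risk": "data leakage", "mapped_to": {"OWASP LLM Top 10"}},
    {"risk": "unclear model ownership", "mapped_to": set()},
]


def unmapped_risks(register):
    """Risks that are not yet tied to any recognized framework."""
    known = set(FRAMEWORKS)
    return [r["risk"] for r in register if not (r["mapped_to"] & known)]


def coverage(register):
    """Count how many registered risks reference each framework."""
    return {fw: sum(fw in r["mapped_to"] for r in register) for fw in FRAMEWORKS}


print(unmapped_risks(risk_register))
print(coverage(risk_register))
```

Unmapped risks are the ones most likely to be described inconsistently across teams, so they are a reasonable place to start a review.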

Module 3: Ethical and Safety Guardrails

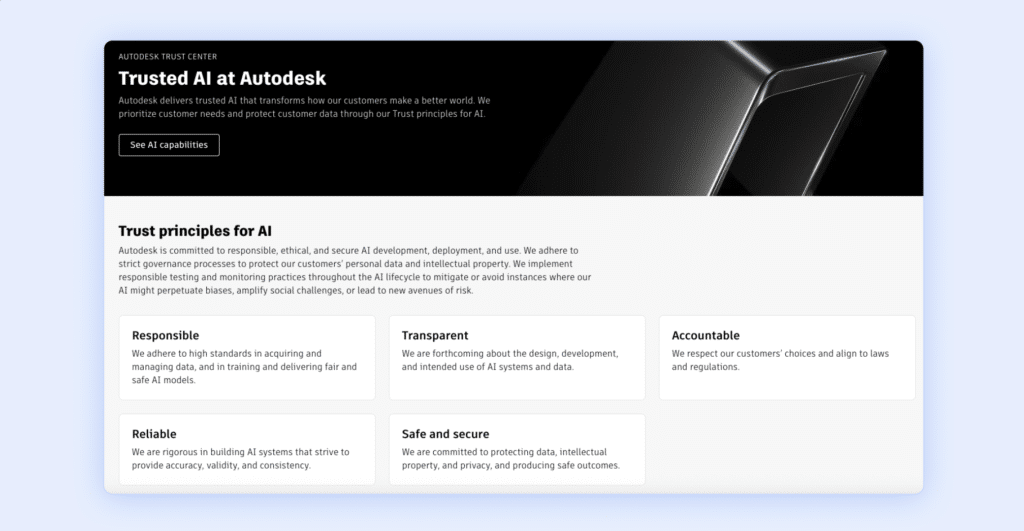

Guardrails start with published principles. Autodesk, for example, articulates Trust Principles for AI that emphasize responsibility, transparency, accountability, reliability, and security. These principles shape development standards, testing requirements, and monitoring practices across the AI lifecycle.

Publishing this level of detail reflects a broader shift in enterprise expectations. Organizations want governance grounded in evidence, and they are willing to publicly stand behind those commitments.

Once those commitments are visible, they create accountability in both directions. Customers gain assurance, and internal teams are held to a clearly stated standard. Transparency does more than communicate that controls exist; it makes maintaining them non-negotiable.

Module 4: Continuous Monitoring

AI systems are inherently dynamic. Traditional compliance operates in cycles, but AI models are updated, providers change, and behaviors evolve continuously. Static oversight struggles to keep pace because these systems are adaptive rather than fixed assets.

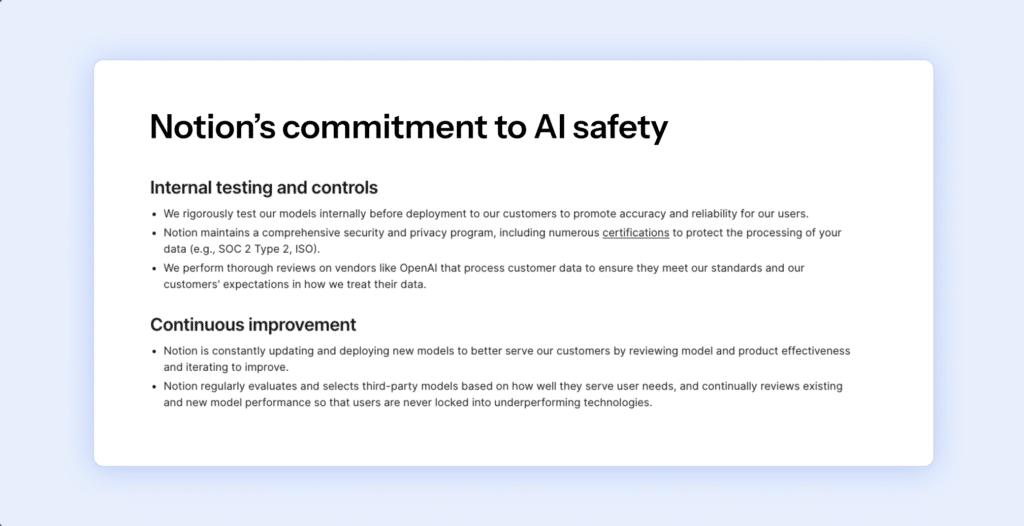

Leading companies are responding by embedding continuous monitoring into their governance structures. Notion regularly evaluates model performance and updates or replaces models based on safety and effectiveness. Dropbox maintains its transparency documentation as a living resource, and Autodesk emphasizes lifecycle monitoring to manage bias and emerging risks.

This approach recognizes that AI risk is ongoing. Continuous controls monitoring enables organizations to track performance, assess bias signals, review red teaming outcomes, and respond to provider changes in real time, bringing governance closer to the engineering lifecycle rather than limiting it to periodic audits.
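Responding to provider changes can be as simple as comparing inventory snapshots on a schedule. The sketch below assumes each snapshot is a dictionary from feature name to a `(provider, model)` pair; the `diff_inventory` function and the sample data are hypothetical, and a real program would feed the result into whatever alerting or review process it already has.

```python
def diff_inventory(previous, current):
    """Compare two {feature: (provider, model)} snapshots and list what changed."""
    changes = []
    for feature in sorted(previous.keys() | current.keys()):
        before = previous.get(feature)
        after = current.get(feature)
        if before is None:
            changes.append((feature, "added", after))
        elif after is None:
            changes.append((feature, "removed", before))
        elif before != after:
            changes.append((feature, "changed", after))
    return changes


# Placeholder snapshots taken at two points in time.
last_week = {
    "document-summary": ("ExampleProvider", "example-model-a"),
    "search-answers": ("ExampleProvider", "example-model-b"),
}
today = {
    "document-summary": ("ExampleProvider", "example-model-a2"),
    "meeting-notes": ("OtherProvider", "other-model-x"),
}

for change in diff_inventory(last_week, today):
    print(change)
```

Each reported change is a prompt to re-review the affected feature and update the public documentation, rather than waiting for the next audit cycle.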

Module 5: Data Privacy and IP Protection

Among AI-related concerns, data leakage and intellectual property exposure remain the most consequential for enterprises. The risk is not just technical but also strategic, as organizations must ensure that proprietary information does not become part of shared training ecosystems.

Companies addressing this effectively do so at the architectural level. Dropbox states that customer data is not used for third-party model training and defines clear retention limits. Notion contractually restricts subprocessors from training on workspace data by default. Autodesk positions data and IP protection as core objectives of its AI governance program, supported by lifecycle controls and vendor oversight.

More mature programs also introduce controlled flexibility. Notion’s opt-in AI LEAP Program allows customers to participate in model improvement initiatives in exchange for defined benefits, while keeping protection as the default and participation explicit.

At its core, enterprise trust depends on preserving control. When data isolation, retention limits, and training prohibitions are built into governance architecture, privacy becomes an enforceable boundary rather than a general assurance.
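A retention limit becomes an enforceable boundary once something checks it. As a minimal sketch, assuming stored records carry a timezone-aware `stored_at` timestamp, the hypothetical `overdue_records` helper below flags anything held longer than the stated window. The 90-day figure is used only as an example value.

```python
from datetime import datetime, timedelta, timezone


def overdue_records(records, retention_days, now):
    """Return ids of records stored longer than the retention window."""
    cutoff = now - timedelta(days=retention_days)
    return [r["id"] for r in records if r["stored_at"] < cutoff]


# Fixed reference time so the example is reproducible.
now = datetime(2025, 1, 1, tzinfo=timezone.utc)

# Placeholder records; ids and ages are illustrative.
records = [
    {"id": "rec-1", "stored_at": now - timedelta(days=100)},
    {"id": "rec-2", "stored_at": now - timedelta(days=10)},
    {"id": "rec-3", "stored_at": now - timedelta(days=90)},
]

print(overdue_records(records, retention_days=90, now=now))
```

In this sketch a record stored exactly 90 days ago is still inside the window; whether the boundary is inclusive is a policy decision that should be stated alongside the limit itself.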

Conclusion

Trust is becoming the defining currency of the AI era. As regulation accelerates, buyer scrutiny deepens, and AI systems move further into the core of how businesses operate, the organizations that will lead aren’t just the ones building the most capable AI. They’re the ones who can prove it is trustworthy.

That proof requires real work. The five modules outlined above—model transparency, framework alignment, ethical guardrails, continuous monitoring, and data protection—are the foundation. They represent the internal discipline that trust is built on. But internal discipline, on its own, is invisible. And invisible trust doesn’t move markets, satisfy regulators, or reassure customers.

This is the shift that matters most. Trust only creates value when it is demonstrable. The companies leading in AI governance understand this intuitively. They’re not treating transparency as a communications exercise. They’re embedding it into the structure of their programs, making governance public, modular, and easy to evaluate. In doing so, they’re transforming trust from an internal achievement into an external signal.

For CISOs and GRC leaders, that’s the new mandate. Strong controls remain essential, but the ability to clearly and continuously demonstrate them is what will ultimately define credibility. The question every stakeholder is now asking isn’t whether governance exists. It’s whether you can prove it.

The organizations that answer that question well and answer it early won’t just be compliant. They’ll be trusted. And in the AI era, that is the only competitive advantage that compounds.

Author

Srikar Sai

As a Senior Content Marketer at Sprinto, Srikar Sai turns cybersecurity chaos into clarity. He cuts through the jargon to help people grasp why security matters and how to act on it, making the complex accessible and the overwhelming actionable. He thrives where tech meets business.